After struggling for decades to make a general-purpose declarative #programming language that "just works" (aside from specific use cases), it seems #LLMs might be the technology that finally makes that happen

🔎 Finds on the topic of "Artificial Intelligence in University-Level Philosophy Teaching"

✍️ by Anne Burkard (Göttingen), David Lauer (Kiel), @davidloewenstein (Düsseldorf), @almut_w (Bielefeld)

🤖 Contribution to the thematic focus of the same name #KI #LLMs

👉 now on lehrgut.org

re software "laundering": I just call it “sampling” software now. I have a great collection of samples for many situations I built up crate digging, and now I can make tracks pretty quickly, layering on a little solo when I feel like it. Depending on the situation (say, who this software is for, how much I "transformed" the samples) it'll feel like something I just straight out borrowed, but it'll also feel like mine at some point.

It feels eerily similar in terms of its ethical and creative implications. We'd lose a lot of music if we straight out banned sampling. And sampling royalties are now mostly corporate owners of catalogs pushing money amongst each other.

Reuters has done an outstanding job covering a lot of corporate dark patterns and practices in the AI space, especially at Meta and a lot of health tech companies. Now we know why Zuck was so keen on dissolving Meta's Responsible AI unit. It was holding back product. Their product is basically cigarettes for the most vulnerable of human minds. Shameful behaviour that hurts this space. #meta #ai #LLMs #tech

So I now have "render surfaces" which are basically a render loop around a runtime session and it's loading a runtime bundle which is javascript which was generated by dropping the render surface into the AI chat and it turned into a reflection tool call and then you can also mount the render surface into a documentation render surface to show its docs and also make a kanban render surface.

I don't even know what I'm saying, but it's more and more starting to resemble familiar concepts, so I know I'll get there.

we have liftoff!

the inkling of a shell, separation of render surfaces, code bundles, hypercard sessions, raw js sessions, a full repl with autocomplete, reflection on docs, code editor.

If only i had persistence...

starting to get a bit more worried about llm supply chain attacks so took a few of the best practice articles I could find out there and of course vibecoded a little validation framework.

I use the SICP idea of using language as an abstraction builder, so I use Go to do the annoying scaffolding and to provide GH API primitives, a workflow YAML parsing primitive, and output format rendering, then pass those to a JS VM and do the actual work there.

I get full separation, and the abstraction layer makes the code small and easy to review.

Now obviously I will need some time to read the articles and understand what this is all about, but I have a serious toolset (including a REPL!) to get work done.
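To make the idea of a workflow check concrete, here is a minimal sketch of one rule that supply chain hardening guides commonly recommend: flag any `uses:` reference that is not pinned to a full 40-character commit SHA. This is not the framework described above (that one is Go plus a JS VM); it is written in Python, scans the raw text instead of parsing YAML, and the function name and example workflow are made up for illustration.

```python
import re

# Matches a `uses:` step such as "uses: actions/checkout@v4".
# Assumption: we scan raw text line by line instead of parsing YAML.
USES_RE = re.compile(r"^\s*(?:-\s*)?uses:\s*['\"]?([^'\"\s#]+)")
FULL_SHA_RE = re.compile(r"^[0-9a-f]{40}$")


def find_unpinned_actions(workflow_text):
    """Return (line_number, reference) pairs for actions not pinned to a full commit SHA."""
    findings = []
    for lineno, line in enumerate(workflow_text.splitlines(), start=1):
        match = USES_RE.match(line)
        if not match:
            continue
        ref = match.group(1)
        # Local actions (./path) and docker:// images are out of scope for this check.
        if ref.startswith("./") or ref.startswith("docker://"):
            continue
        _, _, version = ref.partition("@")
        if not FULL_SHA_RE.match(version):
            findings.append((lineno, ref))
    return findings


# Hypothetical workflow used only to exercise the check.
EXAMPLE_WORKFLOW = """\
jobs:
  build:
    steps:
      - uses: actions/checkout@v4
      - uses: actions/setup-go@0123456789abcdef0123456789abcdef01234567
      - name: local
        uses: ./.github/actions/local
"""

if __name__ == "__main__":
    for lineno, ref in find_unpinned_actions(EXAMPLE_WORKFLOW):
        print(f"line {lineno}: {ref} is not pinned to a full commit SHA")
```

A rule this small is easy to review on its own, which is the point of keeping the scaffolding and the actual checks in separate layers.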

GenAI risk management: limit #GitHub exposure and switch to #sourcehut where @drewdevault has committed to protecting developers. #Microsoft allegedly trained GitHub #Copilot on #copyleft material code, when we all know #RAG #LLMs cannot cite like humans. Potentially massive IP violation. My PhD is #ccbysa and if the RAG LLMs can't abide by the terms, then the people who are using them are in violation. And yes, I will enforce terms. There's pending case law for this. Lots and lots of it!

In the light of discussions in the #Clojure community and also addressing a section in yesterday’s #ClojuristsTogether newsletter:

My experience using LLM coding agents + Clojure has been stellar. It's a great match.

Imo this is due to a combination of

1) the REPL giving a coding agent immediate feedback and letting it evaluate code in the running instance’s context

2) language stability and simplicity: no confusion about new vs. old language constructs, hardly any hallucinations

What BullshitBench does is subject language models to "nonsense" questions, just to see if the language model will *tell* you your question is nonsense, or if it will treat the nonsense question as a real question and give you a nonsense answer.

https://petergpt.github.io/bullshit-benchmark/viewer/index.v2.html

Fun playing with typechecking within the inference loop of an #llm and ast driven kv cache snapshotting. Locally, ram is the bottleneck, so you can easily do speculative decoding and validate with not just static analysis, but running code in an isolated vm as it gets generated and rewind to the previous function or other semantically meaningful unit. So much to be done in oss #llms.
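A rough sketch of the validate-and-rewind loop, under heavy assumptions: no model is called, the per-step candidate lists stand in for repeated samples, a plain string stands in for the snapshotted KV cache, and a syntax check with `ast.parse` stands in for type checking or running the code in an isolated VM. All names here are hypothetical.

```python
import ast


def is_valid_unit(accepted_source, candidate):
    """Static check: does the program still parse with the candidate appended?"""
    try:
        ast.parse(accepted_source + candidate)
        return True
    except SyntaxError:
        return False


def generate_with_rewind(candidates_per_step):
    """Accept one syntactically valid unit per step, rewinding past rejected ones.

    candidates_per_step is a list of lists of source strings, standing in for
    repeated samples from a model (hypothetical; no real model is involved).
    Returns the accepted source and the number of rejected candidates.
    """
    accepted = ""  # stands in for the last good KV cache snapshot
    rejected = 0
    for candidates in candidates_per_step:
        for candidate in candidates:
            if is_valid_unit(accepted, candidate):
                accepted += candidate  # commit: the snapshot now includes this unit
                break
            rejected += 1  # rewind: `accepted` is left untouched
        else:
            raise ValueError("no candidate passed validation for this step")
    return accepted, rejected


if __name__ == "__main__":
    steps = [
        # The first sample is truncated mid-expression, the second is complete.
        ["def add(a, b):\n    return a +\n", "def add(a, b):\n    return a + b\n"],
        ["def double(x):\n    return add(x, x)\n"],
    ]
    source, rejected = generate_with_rewind(steps)
    print(source)
    print("rejected candidates:", rejected)
```

The unit of rewind here is a whole function, matching the "previous function or other semantically meaningful unit" idea; a real implementation would restore cache state instead of just keeping a string.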

"We don't need software engineers anymore; AI agents can generate the code from specifications. We only need people who can write a precise specification that can be understood by an agent."

What you need is a software engineer, then.

true #vibecoding gives you no copyright to the end result, and at the same time exposes you to unknown liability for #copyright infringement. and if you promised to transfer copyright to the results to a client of yours, there's also a legal defect.

using #llms as dumb-ish auto-complete is mostly fine, but giving it autonomy is like copypasting random stuff from the internets and hoping for the best.

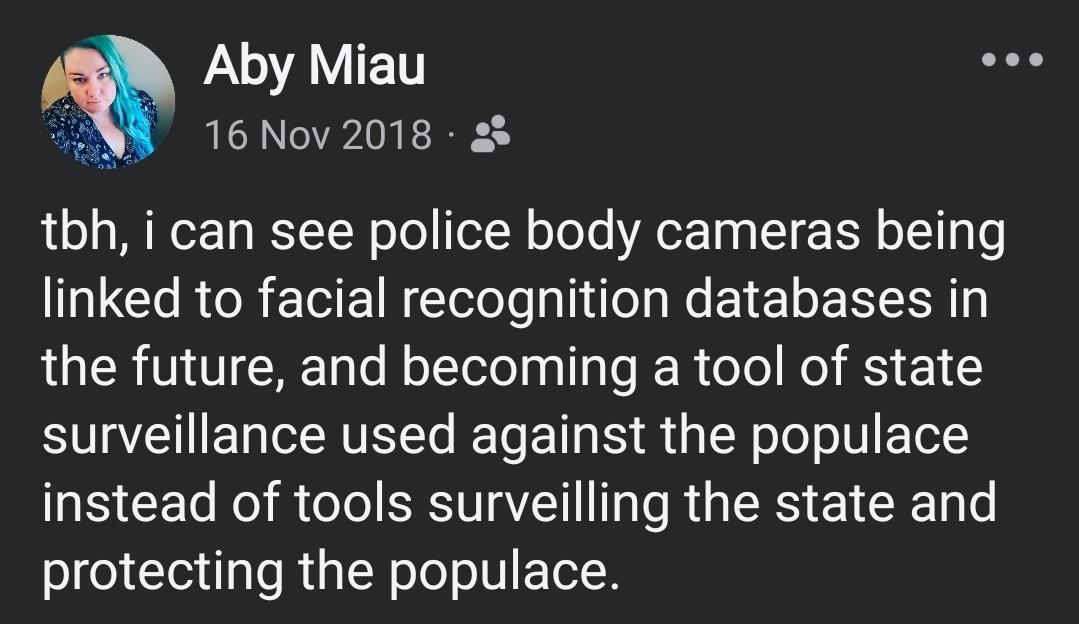

look im gonna need some of you all to start listening to me

_____

DHS BUILT A FACE SCANNING APP. YOU MIGHT ALREADY BE IN IT.

They came to his workplace armed with guns, gas canisters and artificial intelligence. He fought back with his quick wit and street smarts.

What happened next is a preview of what routine face scans could look like on American streets, in this special France 24–Mother Jones report.

Abdikafi Abdurahman Abdullahi, known as Kafi, is one of the few people willing to speak publicly about being subjected to the Department of Homeland Security’s new facial recognition tool, Mobile Fortify.

The Somali-American engineer-turned-Uber driver was waiting for a fare in an airport rideshare lot on January 7th, just hours after Renee Good was shot and killed by federal agents. As he watched a video of her death on his phone, there was a knock on his car door. Outside stood roughly a dozen ICE agents, demanding proof of his citizenship.

Kafi, who is Black and Muslim, refused to show his ID, arguing he was being racially profiled. Instead, he began filming, and his unflappable, mischievous comebacks transformed his video into a viral sensation.

The Department of Homeland Security officially acknowledged the existence of Mobile Fortify in January. But by then it had already been used over 100,000 times in American communities, according to recent court filings.

“This is taking a big and very scary step toward a kind of totalitarian checkpoint society that we have always professed to abhor here in the United States,” warned ACLU attorney Nate Wessler.

https://youtu.be/fDsYzd4ITq0?si=MuhIisCXI0gwRbsF

#tech #technology #surveillance #surveillanceTech #AI #chatgpt #LLMs #policing #HumanRights #RightToPrivacy

You might know I left my previous job to set out on my own. I started "scapegoat consulting LLC", motto "we take the blame", although I just point at the AI and say "claude did it".

Before you all click away, I've always tried to be nuanced about #llms, right since copilot alpha. My posts from 2023 have held up pretty well: https://the.scapegoat.dev/llms-will-fundamentally-change-software-engineering/ - https://the.scapegoat.dev/llms-a-paradigm-shift-for-the-pragmatic-programmer/

I offer:

**programming with AI workshops**

hands-on instruction, at a team level: how does a team building software benefit most from LLMs, tailored to your exact needs, since the technology is so fluid. No tools, no cargo cult. I think where to use LLMs should come from understanding team communication first. Who cares if you can barf out millions of tokens, when maybe the most valuable use is just formatting bug reports properly?

See: https://www.youtube.com/watch?v=zwItokY087U and https://github.com/go-go-golems/go-go-workshop/

_advanced_ programming techniques you will not usually find anywhere else (not just ASTs and DSLs 🤣 )

For example: https://www.youtube.com/watch?v=4GSShoBLZF0

_fundamentals_: focusing on the cultural and semiotic aspects of the technology. Software is language humans created to better communicate about how we work with machines, and now we "taught" machines to talk to themselves, but using our words.

Recent article: https://the.scapegoat.dev/tool-use-and-notation-as-generalization-shaping/

**strategic AI consulting** (cringe...)

high level "what is engineering in a world of LLMs", which some of you know I think a lot about and am usually a bit scarily on point about. My focus has always been on human collaboration and output value and quality, not productivity.

I've always been in the trenches with logistics, embedded, supply chain, manufacturing and so have a pretty wide "systems thinking" approach to solving problems with #LLMs that is quite different from many of the platitudes you'll always hear.

**project work**

My heart is in building high quality software, and that's always been my guiding star, before and after LLMs. I like building reliable solutions that take a real beating in the field. Embedded, full stack, I've been doing this 27 years professionally, you name it I've probably seen it.

Current opensource projects: https://github.com/go-go-golems/

Feel free to dm!