"ollama load balance" with more than one account:
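The post above stops at its title. Here is a minimal sketch, assuming "load balancing" means spreading requests round-robin across several Ollama endpoints (separate servers or separate accounts). The client rotates through the endpoints and skips any that fail. The endpoint URLs and the Bearer-token header are hypothetical placeholders. Only `POST /api/generate` with `model`, `prompt` and `stream` is Ollama's own REST call.

```python
import itertools
import json
import urllib.error
import urllib.request

# Hypothetical endpoints: (base URL, API key or None). A local server needs no key;
# sending a Bearer token for a hosted account is an assumption, check your provider.
ENDPOINTS = [
    ("http://192.168.1.10:11434", None),
    ("http://192.168.1.11:11434", None),
]


class RoundRobin:
    """Hands out endpoints in turn: A, B, A, B, ..."""

    def __init__(self, endpoints):
        self._endpoints = list(endpoints)
        self._cycle = itertools.cycle(self._endpoints)

    def pick(self):
        return next(self._cycle)

    def __len__(self):
        return len(self._endpoints)


def generate(balancer, model, prompt, timeout=120):
    """Non-streaming /api/generate call that tries each endpoint at most once."""
    body = json.dumps({"model": model, "prompt": prompt, "stream": False}).encode()
    last_error = None
    for _ in range(len(balancer)):
        url, api_key = balancer.pick()
        headers = {"Content-Type": "application/json"}
        if api_key:
            headers["Authorization"] = f"Bearer {api_key}"  # assumption, see above
        req = urllib.request.Request(f"{url}/api/generate", data=body, headers=headers)
        try:
            with urllib.request.urlopen(req, timeout=timeout) as resp:
                return json.loads(resp.read())["response"]
        except (urllib.error.URLError, TimeoutError) as err:
            # Endpoint down, returned an HTTP error, or too slow: try the next one.
            last_error = err
    raise RuntimeError(f"all endpoints failed: {last_error}")
```

Usage: `print(generate(RoundRobin(ENDPOINTS), "llama3.1:8b", "Hello"))`. Round-robin keeps the client simple. A reverse proxy in front of the servers could do the same job without changing client code.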

New update for the slides of my talk "Run LLMs Locally":

Now including Reranking, Qwen 3.5 (slower than Qwen 3, but includes Vision) and loading models with Direct I/O.

https://codeberg.org/thbley/talks/raw/branch/main/Run_LLMs_Locally_2025_ThomasBley.pdf

#llm #llamacpp #ollama #stablediffusion #gptoss #qwen3 #glm #opencode #localai #mcp

I believe it's time to retire my #selfhosted #Ollama instance. My #RTX3080 can handle some models, but not the models (and sizes) I actually need or want to use. Perhaps I can slap that 3080 into another rig for gaming or something... or maybe I can use it for ComfyUI or other image-generation work.

One more update for the slides of my talk "Run LLMs Locally":

Now including text-to-speech with Qwen3-TTS and the Model Context Protocol.

https://codeberg.org/thbley/talks/raw/branch/main/Run_LLMs_Locally_2025_ThomasBley.pdf

#llm #llamacpp #ollama #stablediffusion #gptoss #qwen3 #glm #opencode #localai #mcp

python: ollama rag example

https://gist.github.com/ZiTAL/6f322f6778df83f7fe4b98f16bd15279
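I haven't reproduced the gist itself. Below is a minimal, independent sketch of the same idea: embed the documents, pick the ones closest to the question by cosine similarity, and pass them to the model as context. It assumes a local Ollama on the default port with an embedding model (`nomic-embed-text`, an assumption) and `llama3.1:8b` pulled. It uses the `/api/embed` and `/api/generate` endpoints.

```python
import json
import math
import urllib.request

OLLAMA = "http://localhost:11434"  # default local Ollama address


def post(path, payload):
    req = urllib.request.Request(
        OLLAMA + path,
        data=json.dumps(payload).encode(),
        headers={"Content-Type": "application/json"},
    )
    with urllib.request.urlopen(req) as resp:
        return json.loads(resp.read())


def embed(texts, model="nomic-embed-text"):
    # /api/embed takes a list of inputs and returns one vector per input.
    return post("/api/embed", {"model": model, "input": texts})["embeddings"]


def cosine(a, b):
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0  # treat zero vectors as "not similar"


def top_k(query_vec, doc_vecs, k=2):
    """Indices of the k documents most similar to the query."""
    ranked = sorted(range(len(doc_vecs)),
                    key=lambda i: cosine(query_vec, doc_vecs[i]), reverse=True)
    return ranked[:k]


def build_prompt(question, chunks):
    context = "\n".join(f"- {c}" for c in chunks)
    return f"Answer using only this context:\n{context}\n\nQuestion: {question}"


def answer(question, docs, model="llama3.1:8b", k=2):
    doc_vecs = embed(docs)
    query_vec = embed([question])[0]
    chunks = [docs[i] for i in top_k(query_vec, doc_vecs, k)]
    payload = {"model": model, "prompt": build_prompt(question, chunks), "stream": False}
    return post("/api/generate", payload)["response"]
```

For a handful of documents, brute-force cosine similarity in plain Python is enough. Larger collections would usually move the vectors into a vector store.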

I updated the slides for my talk "Run LLMs Locally":

Now including image generation with Qwen3 and content classification based on the Qwen3Guard Technical Report.

https://codeberg.org/thbley/talks/raw/branch/main/Run_LLMs_Locally_2025_ThomasBley.pdf

#llm #llamacpp #ollama #stablediffusion #gptoss #qwen3 #glm #opencode #localai

I have just written my first AI agent in Python that uses Ollama. I'm a little embarrassed that I used Claude to help me write it, but hopefully I can now use it as a template for any other agents I need and stay local.
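For anyone who wants a similar starting point, a minimal agent loop might look like this. It sends the conversation plus tool definitions to Ollama's `/api/chat`, runs any tool the model asks for, feeds the result back, and stops when the model answers in plain text. This is not the poster's code. The `add` tool is hypothetical, and the sketch assumes a model with tool-calling support such as `llama3.1:8b`.

```python
import json
import urllib.request

OLLAMA_CHAT = "http://localhost:11434/api/chat"  # default local Ollama address


def add(a: int, b: int) -> int:
    """Hypothetical example tool: add two integers."""
    return a + b


TOOLS = {"add": add}

# JSON-schema description of the tool that the model sees.
TOOL_SPECS = [{
    "type": "function",
    "function": {
        "name": "add",
        "description": "Add two integers",
        "parameters": {
            "type": "object",
            "properties": {"a": {"type": "integer"}, "b": {"type": "integer"}},
            "required": ["a", "b"],
        },
    },
}]


def run_tool_calls(tool_calls):
    """Execute each requested tool and wrap its result as a 'tool' message."""
    messages = []
    for call in tool_calls:
        fn = call["function"]
        args = fn["arguments"]
        if isinstance(args, str):  # be lenient if arguments arrive JSON-encoded
            args = json.loads(args)
        result = TOOLS[fn["name"]](**args)
        messages.append({"role": "tool", "content": str(result)})
    return messages


def chat(messages, model="llama3.1:8b"):
    payload = {"model": model, "messages": messages, "tools": TOOL_SPECS, "stream": False}
    req = urllib.request.Request(OLLAMA_CHAT, data=json.dumps(payload).encode(),
                                 headers={"Content-Type": "application/json"})
    with urllib.request.urlopen(req) as resp:
        return json.loads(resp.read())["message"]


def agent(question, max_steps=5):
    messages = [{"role": "user", "content": question}]
    for _ in range(max_steps):
        reply = chat(messages)
        messages.append(reply)
        if not reply.get("tool_calls"):
            return reply["content"]  # the model answered directly
        messages.extend(run_tool_calls(reply["tool_calls"]))
    return "stopped after max_steps"
```

The `max_steps` cap prevents a model that keeps requesting tools from looping forever.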

Ollama CLI cheatsheet: ollama serve command, ollama run command examples, ollama ps, and model management.

#Linux #Cheatsheet #Self-Hosting #LLM #AI #Ollama #DevOps #Python

https://www.glukhov.org/llm-hosting/ollama/ollama-cheatsheet/
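Some of the CLI commands in the cheatsheet have REST counterparts, which are handy from scripts. A rough sketch, assuming a local server on the default port: `GET /api/tags` lists installed models (similar to `ollama list`), and `GET /api/ps` lists loaded ones (similar to `ollama ps`). The formatting helpers are illustrative and won't match the CLI output exactly.

```python
import json
import urllib.request

OLLAMA = "http://localhost:11434"  # default local Ollama address


def get(path):
    with urllib.request.urlopen(OLLAMA + path) as resp:
        return json.loads(resp.read())


def human_size(n):
    """Decimal units (1 GB = 1000**3 bytes)."""
    for unit in ("B", "KB", "MB", "GB"):
        if n < 1000:
            return f"{n:.1f} {unit}"
        n /= 1000
    return f"{n:.1f} TB"


def format_models(models):
    return [f"{m['name']:<30} {human_size(m['size'])}" for m in models]


def list_models():  # roughly what `ollama list` shows
    return format_models(get("/api/tags")["models"])


def running_models():  # roughly what `ollama ps` shows
    return format_models(get("/api/ps")["models"])
```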

I updated the slides for my talk "Run LLMs Locally":

Now including remote code execution via Skills, ASCII smuggling, and an extended embeddings slide.

https://codeberg.org/thbley/talks/raw/branch/main/Run_LLMs_Locally_2025_ThomasBley.pdf

#llm #llamacpp #ollama #stablediffusion #gptoss #qwen3 #glm #opencode #localai #security
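ASCII smuggling hides text in the Unicode Tags block (U+E0000 to U+E007F). Each printable ASCII character is shifted by 0xE0000 into that range, where most UIs render nothing but a model may still read it. Here is a small sketch, not taken from the talk, that reveals and strips such characters before text reaches a model. The `smuggle` helper exists only to produce test input.

```python
TAG_START, TAG_END = 0xE0000, 0xE007F  # Unicode Tags block


def reveal_hidden(text):
    """Decode printable tag characters back to the ASCII they encode."""
    return "".join(
        chr(ord(ch) - TAG_START)
        for ch in text
        if TAG_START <= ord(ch) <= TAG_END and 0x20 <= ord(ch) - TAG_START <= 0x7E
    )


def strip_hidden(text):
    """Remove every character in the Tags block."""
    return "".join(ch for ch in text if not TAG_START <= ord(ch) <= TAG_END)


def smuggle(visible, hidden):
    """Demo only: append ASCII `hidden` as invisible tag characters."""
    return visible + "".join(chr(TAG_START + ord(c)) for c in hidden)
```

Usage: `strip_hidden(user_input)` before building a prompt, and log `reveal_hidden(user_input)` if it is non-empty.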

I've randomly encountered this blog: https://peter-nhan.github.io/posts/Local-Android-Ollama/

You can run #Ollama models on your #Android phone via #termux, so you can basically run a chatbot on your phone!

It works great on my old OnePlus Nord, but it's extremely slow or doesn't work at all on my Pixel 9 Pro running #GrapheneOS. Does anyone know how to fix this?

My colleague Bauke Scholtz just released #OmniAI, a lightweight #Java library for working with different #AI providers (#OpenAI, #Anthropic, #Google, #Grok, #Meta, #Azure, #OpenRouter, #Ollama and a custom option). It's simple, #JakartaEE friendly (optional #CDI and #EL support), and has some nice features like summarization, moderation, and translation. Check it out! https://balusc.omnifaces.org/2026/01/one-api-any-ai.html

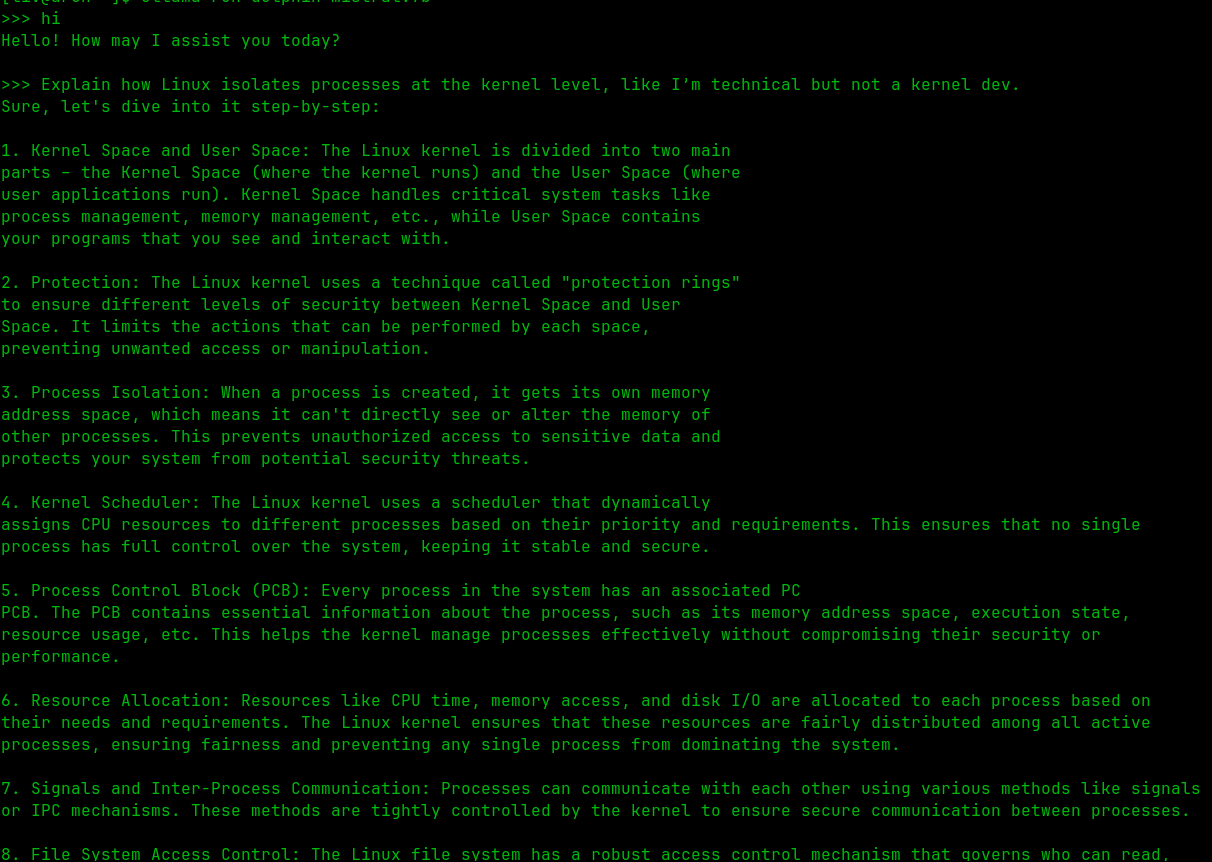

Lately I’ve been using AI locally instead of cloud stuff.

No internet needed, no accounts, nothing leaving my machine. Cloud AI is cool, but you share more than you realize.

Local just feels safer to me. I'm using models like dolphin-mistral:7b, llama3.1:8b, and mistral:7b.